Due to the Energy Transition, the use of power transmission and distribution grids is changing. The control architecture of power grids needs to be swiftly adapted to take account of infeed at lower grid levels, higher dynamics in flow patterns, and more distributed controls (both internal controls and grid flexibility services from third parties). In this context, TSOs and DSOs require a new generation of Digital Substation Automation Systems (DSAS) that can provide more complex, dynamic, and adaptive automation functions at grid nodes and at the edge, as well as enhanced orchestration from central systems, in a flexible and scalable manner. Virtualization is seen as a key innovation to fulfill these needs.

SEAPATH, Software Enabled Automation Platform and Artifacts (THerein), aims at developing a “reference design” and “industrial grade” open source real-time platform that can run virtualized automation and protection applications (for the power grid industry in the first place and potentially beyond). This platform is intended to host multi-provider applications.

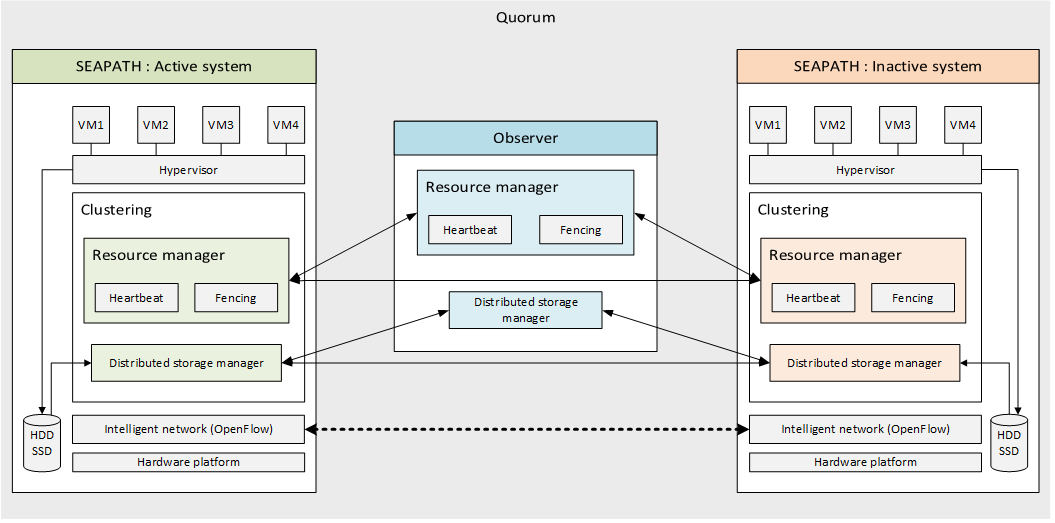

Due to the nature of the virtualized applications, whose function is to regulate, control, command and transmit information relating to the operation, management and maintenance of an electrical substation, the virtualization base must meet the challenges of reliability, performance and availability.

Features

- Hosting of virtualization systems: a heterogeneous variety of virtual machines can be installed and managed on the platform.

- High availability and clustering: machines in the cluster are externally monitored to guarantee high availability in case of hardware or software failures.

- Distributed storage: data and disk images from the virtual machines are replicated and synchronized to guarantee their integrity and availability on the cluster.

- Intelligent virtual network: the virtualisation platform can configure and manage network traffic at the data layer level.

- Administration: the system can be easily configured and managed from a remote machine connected to the network, as well as by an on-site administrator.

- Automatic update: the virtualisation platform can be automatically updated from a remote server.

SEAPATH architecture

The virtualisation platform uses the following open source tools:

- QEMU: Emulator and virtualizer that can perform hardware virtualization.

- KVM: Linux kernel module that exposes the processor's hardware virtualization extensions, so that the machine can function as a hypervisor.

- Libvirt: Virtualization API and management tool used to configure the virtual machines' simulated hardware.

- Pacemaker: High availability resource manager that offers clustering functionalities. It is used together with Corosync (cluster communication) and STONITH (fencing).

- Ceph: Scalable distributed-storage tool that offers persistent storage within a cluster.

- Open vSwitch: Multilayer virtual switch designed to manage massive network automation in virtualization environments.

- Ansible: Infrastructure management tool that simplifies orchestration of machines through declarative configuration.
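To make the QEMU / KVM / Libvirt part of the stack more concrete, here is a minimal sketch of what a Libvirt guest description can look like when generated programmatically. It only uses the Python standard library to build a small domain XML document. The guest name, memory size, and disk path are hypothetical values chosen for illustration, and the file-backed disk is a simplifying assumption (on SEAPATH, disk images live in the distributed storage). This is not taken from the SEAPATH code base.

```python
import xml.etree.ElementTree as ET


def build_domain_xml(name: str, memory_mib: int, vcpus: int, disk_path: str) -> str:
    """Return a minimal Libvirt domain definition for a KVM guest."""
    # type="kvm" asks Libvirt to run the guest with KVM acceleration.
    domain = ET.Element("domain", {"type": "kvm"})
    ET.SubElement(domain, "name").text = name
    ET.SubElement(domain, "memory", {"unit": "MiB"}).text = str(memory_mib)
    ET.SubElement(domain, "vcpu").text = str(vcpus)

    # "hvm" describes a fully virtualized guest.
    os_elem = ET.SubElement(domain, "os")
    ET.SubElement(os_elem, "type", {"arch": "x86_64"}).text = "hvm"

    # One virtio disk backed by a local qcow2 file (assumption for this sketch).
    devices = ET.SubElement(domain, "devices")
    disk = ET.SubElement(devices, "disk", {"type": "file", "device": "disk"})
    ET.SubElement(disk, "driver", {"name": "qemu", "type": "qcow2"})
    ET.SubElement(disk, "source", {"file": disk_path})
    ET.SubElement(disk, "target", {"dev": "vda", "bus": "virtio"})

    return ET.tostring(domain, encoding="unicode")


if __name__ == "__main__":
    # Hypothetical guest used only to show the generated XML.
    print(build_domain_xml("guest0", 2048, 2, "/var/lib/libvirt/images/guest0.qcow2"))
```

A document of this kind can then be handed to Libvirt, for example with `virsh define`, which registers the guest so that it can be started later.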

A Yocto distribution

The Yocto Project is a Linux Foundation collaborative open source project whose goal is to produce tools and processes that enable the creation of Linux distributions for embedded and IoT software that are independent of the underlying architecture of the embedded hardware.

The Yocto Project provides interoperable tools, metadata, and processes that enable the rapid, repeatable development of Linux-based embedded systems in which every aspect of the development process can be customized.

The Layer Model simultaneously supports collaboration and customization. Layers are repositories that contain related sets of instructions that tell the OpenEmbedded build system what to do. You can collaborate, share, and reuse layers.

Layers can contain changes to previous instructions or settings at any time. This powerful override capability is what allows you to customize previously supplied collaborative or community layers to suit your product requirements.
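The override idea can be illustrated with a deliberately simplified toy model. The sketch below treats each layer as a named set of settings with a priority, and lets higher-priority layers replace values supplied by lower ones. The `Layer` class, the `resolve` function, and the setting names are all hypothetical. The real OpenEmbedded build system resolves metadata in a far richer way, so this only conveys the principle.

```python
from dataclasses import dataclass, field


@dataclass
class Layer:
    name: str
    priority: int                       # higher value wins on conflict
    settings: dict = field(default_factory=dict)


def resolve(layers):
    """Merge layer settings; higher-priority layers override lower ones."""
    merged = {}
    # Apply layers from lowest to highest priority so later updates win.
    for layer in sorted(layers, key=lambda layer: layer.priority):
        merged.update(layer.settings)
    return merged


if __name__ == "__main__":
    # Hypothetical layers: a community base layer and a product-specific layer.
    base = Layer("base", 5, {"kernel": "generic", "hostname": "linux"})
    product = Layer("product", 10, {"kernel": "realtime"})
    print(resolve([product, base]))
```

In this example the product layer changes only the `kernel` setting, while `hostname` is still inherited from the base layer, which mirrors how a product layer can customize a shared layer without modifying it.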

How is high availability ensured in the cluster?

- Resource management: communication between the different machines within a group is managed by Pacemaker and two companion components. Corosync handles intra-cluster communication and the heartbeat mechanism, while STONITH implements the fencing system.

- Distributed storage: All data written to disk is replicated and synchronised across the group members using Ceph. Note that, from a user's point of view, only a single instance of each VM can be started on the system at any given time.

- Intelligent network: The different machines in the cluster are connected at the data layer level (OSI model), using Open vSwitch and DPDK for administration and management.
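The interplay between heartbeats, fencing, and the single-instance rule can be sketched with a small simulation. The code below is a toy model under stated assumptions, not Pacemaker's actual algorithm: the `Node` class, the `failover` function, the two-second timeout, and the "least loaded node" placement rule are all hypothetical. It shows the general sequence only: a node with a stale heartbeat is fenced first, and only then are its VMs restarted elsewhere, so that each VM runs in exactly one place.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    last_heartbeat: float               # time of the latest heartbeat, in seconds
    fenced: bool = False
    vms: set = field(default_factory=set)


def failover(nodes, now, timeout=2.0):
    """Fence nodes with a stale heartbeat and move their VMs to healthy nodes.

    Returns a list of (vm, source_node, target_node) tuples.
    """
    healthy = [n for n in nodes
               if not n.fenced and now - n.last_heartbeat <= timeout]
    if not healthy:
        return []                       # nowhere to relocate VMs
    healthy_names = {n.name for n in healthy}

    moved = []
    for node in nodes:
        if node.name in healthy_names or node.fenced:
            continue
        # Fence before relocating, so the VM cannot keep running on this node.
        node.fenced = True
        for vm in sorted(node.vms):
            # Hypothetical placement rule: pick the least loaded healthy node.
            target = min(healthy, key=lambda n: (len(n.vms), n.name))
            target.vms.add(vm)
            moved.append((vm, node.name, target.name))
        node.vms.clear()
    return moved


if __name__ == "__main__":
    cluster = [
        Node("node-a", last_heartbeat=9.5, vms={"vm-1"}),
        Node("node-b", last_heartbeat=5.0, vms={"vm-2"}),   # heartbeat is stale
        Node("node-c", last_heartbeat=9.8),
    ]
    print(failover(cluster, now=10.0))
```

Because the disk images are replicated across the group by the distributed storage, the target node in such a scenario can start the VM from the same data that the failed node was using.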